Overview

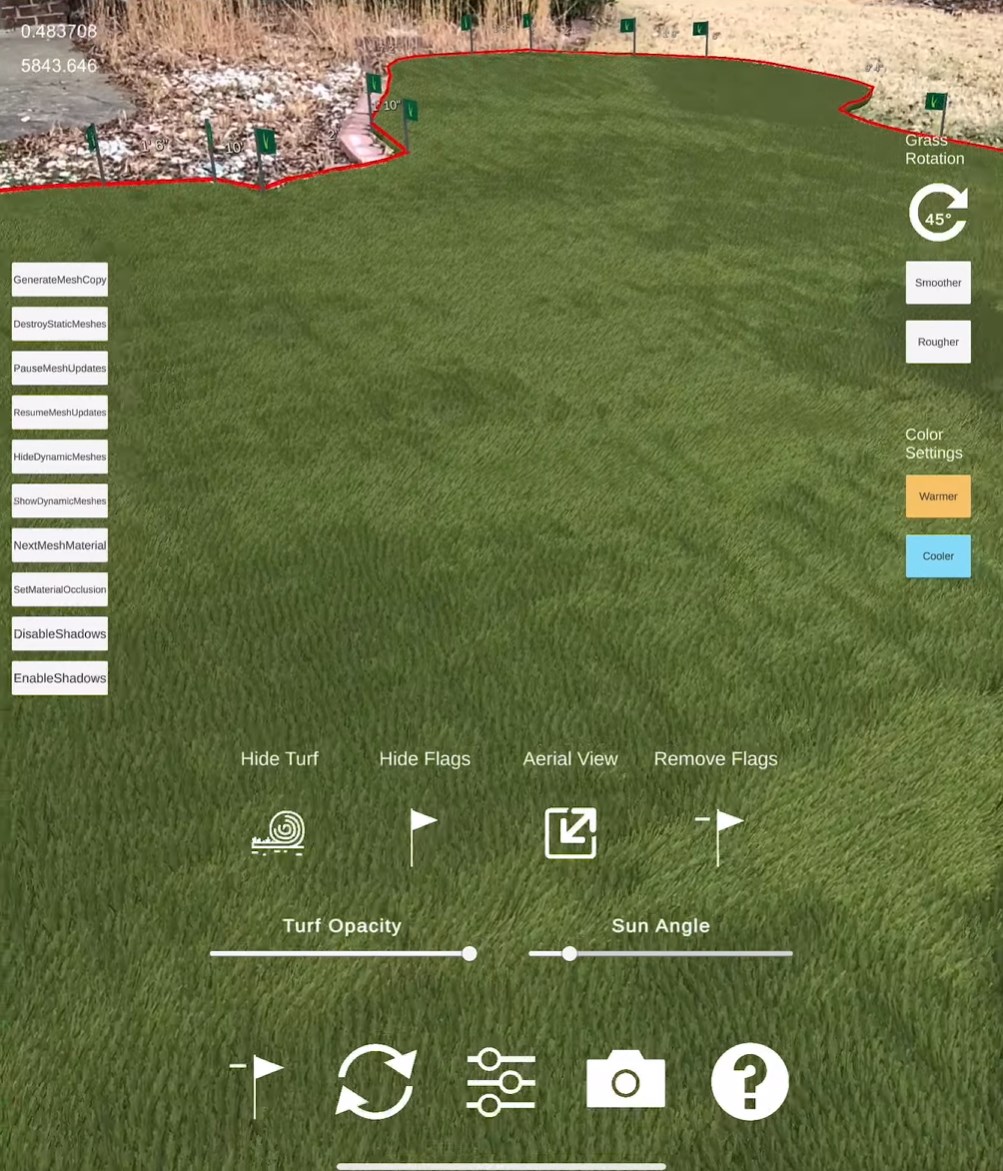

This experiment focused on making dense, stylized vegetation feel grounded in the real world instead of floating as a visual effect. The brief was simple: render a lot of grass on an iPad, keep it lit and reactive, and make it sit convincingly on top of scanned physical geometry.

Problem

Mobile AR scenes rarely leave room for dense geometry, dynamic shading, and convincing world alignment at the same time.

Focus

Push visual density without abandoning realtime responsiveness on iPad-class hardware.

Result

A grass system that feels materially present rather than composited on top of the camera feed.

Challenge

The hard part was balancing fidelity and performance. Thousands of individual blades create a much better illusion than billboard-style patches, but they also multiply draw cost quickly. On top of that, AR content only looks believable when it respects the surfaces and orientation of the scanned space.

Approach

I treated the piece as a rendering study instead of a simple placement demo. The visual target was lush, readable grass with enough directional variation to avoid visible repetition, while the technical target was stable performance on a mobile device.

Implementation

I used GPU instancing so each blade could render as a 3D object with dynamic lighting without blowing the iPad frame budget. LiDAR scan data handled surface alignment, which kept the grass tied to the physical room instead of hovering over the camera feed.
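As a rough illustration of how instancing and scan alignment fit together, the sketch below builds the kind of per-blade data an instanced draw call could consume: a position on a scanned mesh triangle plus that triangle's normal, so each blade stands on the surface rather than on a flat plane. It is not the project's actual code. The function name `scatter_on_triangle`, the uniform random placement, and the tuple-based vertex format are all assumptions made for the example.

```python
import math
import random


def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def cross(a, b):
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def normalize(v):
    # Assumes a non-zero vector; degenerate triangles should be filtered out first.
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def scatter_on_triangle(a, b, c, count, seed=0):
    """Return (position, normal) pairs for `count` blades on one mesh triangle.

    Hypothetical helper: a, b, c are (x, y, z) vertices of a scanned triangle.
    """
    rng = random.Random(seed)
    normal = normalize(cross(sub(b, a), sub(c, a)))
    blades = []
    for _ in range(count):
        # Uniform sampling in barycentric coordinates: points that fall
        # outside the triangle are reflected back inside.
        u, v = rng.random(), rng.random()
        if u + v > 1.0:
            u, v = 1.0 - u, 1.0 - v
        w = 1.0 - u - v
        position = tuple(w * a[i] + u * b[i] + v * c[i] for i in range(3))
        blades.append((position, normal))
    return blades
```

In a real renderer this list would be packed into a GPU buffer once, and a single instanced draw would render one blade mesh per entry, which is what keeps the per-blade cost low.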

Images control the grass direction: each pixel's alpha value sets the rotation of the corresponding “pixel” of grass, so different images produce different patterns across the field. That gave the system an art-directable control layer instead of locking the look to purely procedural noise.
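The alpha-to-rotation idea can be sketched in a few lines. The mapping below is an assumption, since the write-up does not specify the exact range: it treats alpha as an 8-bit value and spreads it linearly over a full turn. The names `alpha_to_yaw`, `blade_direction`, and `sample_alpha` are hypothetical, and the image is represented as a plain 2D list of alpha values to keep the example self-contained.

```python
import math


def alpha_to_yaw(alpha):
    """Map an 8-bit alpha value (0..255) to a yaw angle in radians in [0, 2*pi).

    Assumed mapping: linear, with 256 steps covering one full turn.
    """
    return (alpha / 256.0) * 2.0 * math.pi


def blade_direction(alpha):
    """Unit direction in the ground plane (x, z) that a blade faces."""
    yaw = alpha_to_yaw(alpha)
    return (math.cos(yaw), math.sin(yaw))


def sample_alpha(alpha_rows, u, v):
    """Nearest-neighbour lookup into a 2D list of alpha values.

    u and v are normalized coordinates in [0, 1]; values at the upper
    edge are clamped to the last pixel.
    """
    height = len(alpha_rows)
    width = len(alpha_rows[0])
    x = min(int(u * width), width - 1)
    y = min(int(v * height), height - 1)
    return alpha_rows[y][x]
```

Each blade would look up the pixel under its position in the field, convert that alpha to a yaw, and rotate around the surface normal by that amount. Painting a gradient or a swirl into the alpha channel then shows up directly as a pattern in the grass.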

Media

The demo shows the important part: density and anchoring in motion. The realism comes less from one clever shader and more from orientation, lighting, and placement all staying disciplined.

Outcome

The result is a mobile AR rendering pattern: use instancing where density matters, use LiDAR where grounding matters, and keep enough authored control in the pipeline to shape the final motion and patterning.